Researchers have uncovered gaps in Amazon’s skill vetting process for the Alexa voice assistant ecosystem that could allow a malicious actor to publish a deceptive skill under any arbitrary developer name and even make backend code changes after approval to trick users into giving up sensitive information.

The findings were presented on Wednesday at the Network and Distributed System Security Symposium (NDSS) conference by a group of academics from Ruhr-Universität Bochum and North Carolina State University, who analyzed 90,194 skills available in seven countries: the US, the UK, Australia, Canada, Germany, Japan, and France.

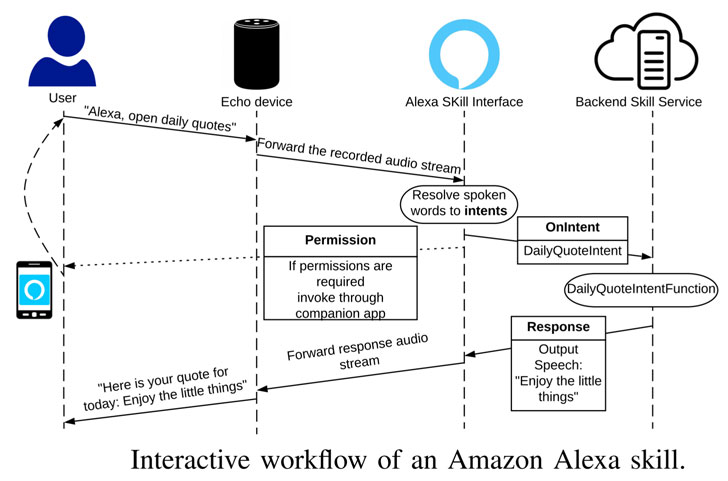

Amazon Alexa allows third-party developers to create additional functionality for devices such as Echo smart speakers by configuring “skills” that run on top of the voice assistant, thereby making it easy for users to initiate a conversation with the skill and complete a specific task.

Chief among the findings is the concern that a user can activate the wrong skill, which can have severe consequences if the skill that is triggered was designed with insidious intent.

The pitfall stems from the fact that multiple skills can have the same invocation phrase.

Indeed, the practice is so prevalent that the investigation spotted 9,948 skills that share an invocation name with at least one other skill in the US store alone. Across all seven skill stores, only 36,055 skills had a unique invocation name.
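As a rough illustration of how shared invocation names could be counted in a skill catalog, here is a minimal Python sketch. It is not the researchers' tooling: the record fields (`skill_id`, `invocation_name`), the normalization rule, and the sample entries are all assumptions made for the example.

```python
from collections import defaultdict


def find_shared_invocation_names(skills):
    """Group skill IDs by normalized invocation name.

    Returns only the names used by more than one skill.
    """
    by_name = defaultdict(list)
    for skill in skills:
        # Assumed normalization: lowercase and collapse repeated whitespace.
        key = " ".join(skill["invocation_name"].lower().split())
        by_name[key].append(skill["skill_id"])
    return {name: ids for name, ids in by_name.items() if len(ids) > 1}


# Hypothetical sample records, not data from the study.
sample_skills = [
    {"skill_id": "skill-a", "invocation_name": "Space Facts"},
    {"skill_id": "skill-b", "invocation_name": "space  facts"},
    {"skill_id": "skill-c", "invocation_name": "trip planner"},
]

shared = find_shared_invocation_names(sample_skills)
print(shared)  # {'space facts': ['skill-a', 'skill-b']}
```

In this toy catalog, two of the three skills answer to the same phrase, so a user asking for "space facts" cannot tell from the phrase alone which one will respond.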

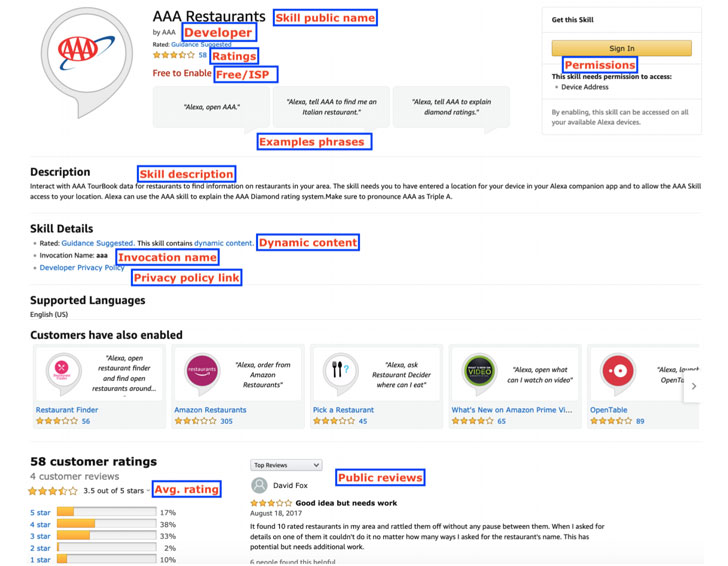

Given that the actual criteria Amazon uses to auto-enable a specific skill among several skills with the same invocation name remain unknown, the researchers cautioned that it is possible to activate the wrong skill and that an adversary can get away with publishing skills using well-known company names.

“This primarily happens because Amazon currently does not employ any automated approach to detect infringements for the use of third-party trademarks, and depends on manual vetting to catch such malevolent attempts which are prone to human error,” the researchers explained. “As a result users might become exposed to phishing attacks launched by an attacker.”

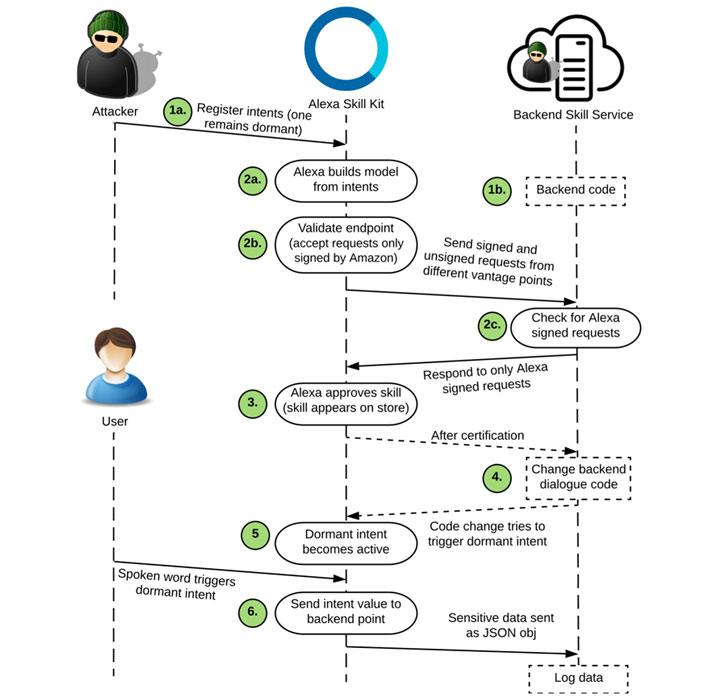

Even worse, an attacker can make code changes following a skill’s approval to coax a user into revealing sensitive information like phone numbers and addresses by triggering a dormant intent.

In a way, this is analogous to a technique called versioning that is used to bypass verification defences. Versioning refers to submitting a benign version of an app to the Android or iOS app store to build trust among users, only to replace the codebase over time with additional malicious functionality through later updates.

To test this out, the researchers built a trip planner skill that allows a user to create a trip itinerary. After initial vetting, the skill was tweaked to “inquire the user for his/her phone number so that the skill could directly text (SMS) the trip itinerary,” thus deceiving the individual into revealing his or her personal information.

Furthermore, the study found that the permission model Amazon uses to protect sensitive Alexa data can be circumvented. This means that an attacker can directly request data from the user (e.g., phone numbers, Amazon Pay details, etc.) that is supposed to be cordoned off by permission APIs.

The idea is that while skills requesting sensitive data must invoke the permission APIs, nothing stops a rogue developer from asking for that information straight from the user.

The researchers said they identified 358 such skills capable of requesting information that should ideally be secured by the API.
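To make the bypass concrete, the following Python sketch flags a skill whose spoken prompts ask for a type of data that it has not declared a permission for. It is only an illustration under stated assumptions, not the method used in the study: the record layout, the permission labels (`phone_number`, `address`), and the keyword patterns are hypothetical and do not correspond to real Alexa permission scopes.

```python
import re

# Hypothetical mapping from a data type to phrases suggesting a skill asks for it.
SENSITIVE_PATTERNS = {
    "phone_number": re.compile(r"\b(phone|mobile) number\b", re.IGNORECASE),
    "address": re.compile(r"\b(home|street|postal) address\b", re.IGNORECASE),
}


def undeclared_requests(skill):
    """Return data types the prompts ask for without a matching declared permission."""
    declared = set(skill.get("permissions", []))
    asked = set()
    for prompt in skill.get("prompts", []):
        for data_type, pattern in SENSITIVE_PATTERNS.items():
            if pattern.search(prompt):
                asked.add(data_type)
    return sorted(asked - declared)


# Hypothetical skill record modelled on the trip planner scenario.
trip_planner = {
    "permissions": [],
    "prompts": [
        "Where would you like to go?",
        "What is your phone number so I can text you the itinerary?",
    ],
}

flagged = undeclared_requests(trip_planner)
print(flagged)  # ['phone_number']
```

Keyword matching like this is crude and would miss paraphrased requests, but it shows why a permission API alone does not protect data that a skill can simply ask for in conversation.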

Lastly, in an analysis of privacy policies across different categories, it was found that only 24.2% of all skills provide a privacy policy link, and that around 23.3% of such skills do not fully disclose the data types associated with the permissions requested.

Noting that Amazon does not mandate a privacy policy for skills targeting children under the age of 13, the study raised concerns about the lack of widely available privacy policies in the “kids” and “health and fitness” categories.

“As privacy advocates we feel both ‘kid’ and ‘health’ related skills should be held to higher standards with respect to data privacy,” the researchers said, while urging Amazon to validate developers and perform recurring backend checks to mitigate such risks.

“While such applications ease users’ interaction with smart devices and bolster a number of additional services, they also raise security and privacy concerns due to the personal setting they operate in,” they added.

Some parts of this article are sourced from:

thehackernews.com
