Apple on Thursday said it is introducing new child safety features in iOS, iPadOS, watchOS, and macOS as part of its efforts to limit the spread of Child Sexual Abuse Material (CSAM) in the U.S.

To that effect, the iPhone maker said it intends to begin client-side scanning of images shared via every Apple device for known child abuse content as they are being uploaded into iCloud Photos, in addition to leveraging on-device machine learning to vet all iMessage images sent or received by minor accounts (aged under 13) to warn parents of sexually explicit photos in the messaging platform.

Furthermore, Apple plans to update Siri and Search to stage an intervention when users try to perform searches for CSAM-related topics, alerting them that the “interest in this topic is harmful and problematic.”

“Messages uses on-device machine learning to analyze image attachments and determine if a photo is sexually explicit,” Apple noted. “The feature is designed so that Apple does not get access to the messages.” The feature, called Communication Safety, is said to be an opt-in setting that must be enabled by parents through the Family Sharing feature.

How Child Sexual Abuse Material is Detected

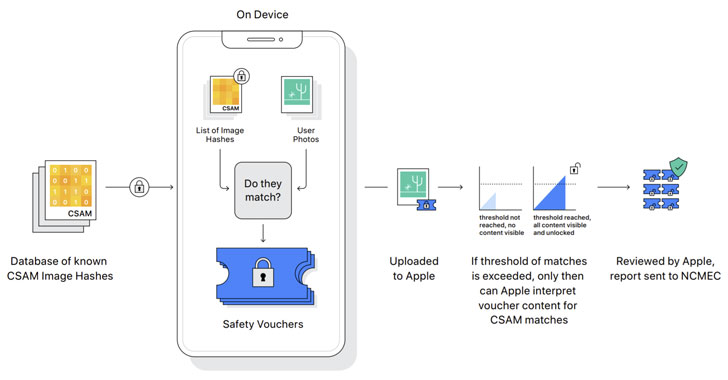

Detection of known CSAM images involves carrying out on-device matching using a database of known CSAM image hashes provided by the National Center for Missing and Exploited Children (NCMEC) and other child safety organizations before the photos are uploaded to the cloud. “NeuralHash,” as the system is called, is powered by a cryptographic technology known as private set intersection. However, it is worth noting that while the scanning happens automatically, the feature only works when iCloud photo sharing is turned on.
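
To make the idea of matching against a database of known hashes concrete, here is a minimal Python sketch. It is not NeuralHash and it does not implement private set intersection: the `average_hash` function is a toy perceptual hash chosen for illustration, the lookup is a plain in-memory comparison, and every name and pixel value below is hypothetical.

```python
from typing import List, Set


def average_hash(pixels: List[List[int]]) -> int:
    """Toy perceptual hash (an illustrative stand-in, not NeuralHash).

    Emits one bit per pixel: 1 if the pixel is brighter than the image mean.
    """
    flat = [p for row in pixels for p in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for p in flat:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits


def hamming_distance(a: int, b: int) -> int:
    """Number of bit positions in which two hashes differ."""
    return bin(a ^ b).count("1")


def matches_known_hash(image_hash: int, known_hashes: Set[int],
                       max_distance: int = 0) -> bool:
    """Return True if the hash is within max_distance bits of any known hash."""
    return any(hamming_distance(image_hash, h) <= max_distance
               for h in known_hashes)


# Hypothetical 2x2 grayscale images (values 0-255), for illustration only.
known_image = [[10, 200], [220, 15]]
brightened_copy = [[30, 220], [240, 35]]   # same picture, uniformly brighter
unrelated_image = [[200, 10], [15, 220]]   # inverted light/dark pattern

known_hashes = {average_hash(known_image)}

copy_matches = matches_known_hash(average_hash(brightened_copy), known_hashes)
unrelated_matches = matches_known_hash(average_hash(unrelated_image), known_hashes)
```

In this sketch the brightened copy hashes to the same value as the original, while the unrelated image does not. That tolerance to small changes is the property that separates a perceptual hash from an ordinary cryptographic hash.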

What’s more, Apple is expected to use another cryptographic principle called threshold secret sharing that allows it to “interpret” the contents if an iCloud Photos account crosses a threshold of known child abuse imagery, following which the content is manually reviewed to confirm there is a match, and if so, disable the user’s account, report the material to NCMEC, and pass it on to law enforcement.
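
The threshold idea can be illustrated with a textbook Shamir secret sharing scheme. This is only a sketch under stated assumptions: the article does not describe Apple's actual construction, and the prime modulus, function names, and parameter values here are hypothetical choices made for the example. A secret is split into shares so that any `threshold` of them recover it, while fewer do not.

```python
import secrets
from typing import List, Tuple

# Assumed field modulus for the example: the Mersenne prime 2^127 - 1.
PRIME = 2**127 - 1


def split_secret(secret: int, threshold: int,
                 num_shares: int) -> List[Tuple[int, int]]:
    """Split `secret` into points on a random polynomial of degree threshold-1."""
    # The constant term is the secret; the other coefficients are random.
    coeffs = [secret] + [secrets.randbelow(PRIME) for _ in range(threshold - 1)]
    shares = []
    for x in range(1, num_shares + 1):
        y = 0
        for c in reversed(coeffs):      # Horner evaluation modulo PRIME
            y = (y * x + c) % PRIME
        shares.append((x, y))
    return shares


def recover_secret(shares: List[Tuple[int, int]]) -> int:
    """Lagrange-interpolate the polynomial at x = 0 to recover the secret."""
    secret = 0
    for i, (xi, yi) in enumerate(shares):
        num, den = 1, 1
        for j, (xj, _) in enumerate(shares):
            if i != j:
                num = (num * -xj) % PRIME
                den = (den * (xi - xj)) % PRIME
        # pow(den, -1, PRIME) is the modular inverse (Python 3.8+).
        secret = (secret + yi * num * pow(den, -1, PRIME)) % PRIME
    return secret


# Hypothetical values: a key split into 5 shares, any 3 of which suffice.
key = 123456789
shares = split_secret(key, threshold=3, num_shares=5)
recovered = recover_secret(shares[:3])
```

With three or more shares, `recover_secret` returns the original key. With only two, interpolation yields an unrelated value, which mirrors the claim that content becomes interpretable only after an account crosses the threshold.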

Researchers Express Concern About Privacy

Apple’s CSAM initiative has prompted security researchers to express concern that it could suffer from mission creep and be expanded to detect other kinds of content that could have political and safety implications, or even be used to frame innocent individuals by sending them innocuous but adversarial images designed to appear as matches for child abuse imagery.

U.S. whistle-blower Edward Snowden tweeted that, despite the project’s good intentions, what Apple is rolling out is “mass surveillance,” while Johns Hopkins University cryptography professor and security expert Matthew Green said, “the problem is that encryption is a powerful tool that provides privacy, and you can’t really have strong privacy while also surveilling every image anyone sends.”

Apple already checks iCloud files and images sent over email against known child abuse imagery, as do tech giants like Google, Twitter, Microsoft, Facebook, and Dropbox, who employ similar image hashing methods to look for and flag potential abuse material. But Apple’s attempt to walk a privacy tightrope could renew debates about weakening encryption, escalating a long-running tug of war over privacy and policing in the digital age.

The New York Times, in a 2019 investigation, revealed that a record 45 million online photos and videos of children being sexually abused were reported in 2018, out of which Facebook Messenger accounted for nearly two-thirds, with Facebook as a whole responsible for 90% of the reports.

Apple, along with Facebook-owned WhatsApp, has continually resisted efforts to intentionally weaken encryption and backdoor its systems. That said, Reuters reported last year that Apple abandoned plans to encrypt users’ full backups to iCloud in 2018 after the U.S. Federal Bureau of Investigation (FBI) raised concerns that doing so would impede investigations.

“Child exploitation is a serious problem, and Apple isn’t the first tech company to bend its privacy-protective stance in an attempt to combat it. But that choice will come at a high price for overall user privacy,” the Electronic Frontier Foundation (EFF) said in a statement, noting that Apple’s move could break encryption protections and open the door for broader abuses.

“All it would take to widen the narrow backdoor that Apple is building is an expansion of the machine learning parameters to look for additional types of content, or a tweak of the configuration flags to scan, not just children’s, but anyone’s accounts. That’s not a slippery slope; that’s a fully built system just waiting for external pressure to make the slightest change,” it added.

The CSAM efforts are set to roll out in the U.S. in the coming months as part of iOS 15 and macOS Monterey, but it remains to be seen if, or when, they would be available internationally. In December 2020, Facebook was forced to switch off some of its child abuse detection tools in Europe in response to recent changes to the European Commission’s e-privacy directive that effectively ban automated systems scanning for child sexual abuse images and other illegal content without users’ explicit consent.

Some parts of this article are sourced from:

thehackernews.com
