Researchers have discovered a new method of attack against AI systems that aims to clog up a network and slow down processing, in a manner similar to a denial-of-service attack.

In a paper being presented at the International Conference on Learning Representations, researchers from the Maryland Cybersecurity Center have outlined how deep neural networks can be tricked by adding extra “noise” to their inputs, as reported by MIT Technology Review.

The attack specifically targets the growing adoption of input-adaptive multi-exit neural networks, which are designed to reduce carbon footprint by passing images through just one neural layer to see whether the threshold needed to accurately report what the image contains has been reached.

In a traditional neural network, the image would be passed through every layer before a conclusion is drawn, often making it unsuitable for smart devices or similar technology that requires quick answers with low energy consumption.
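The difference between the two designs can be illustrated with a minimal Python sketch. It is not the researchers' code: the function names, the `stages` structure, and the 0.9 confidence threshold are all assumptions chosen for illustration. Each stage pairs a layer block with a small exit classifier, and inference stops at the first exit whose top softmax probability clears the threshold.

```python
import math

def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]

def early_exit_predict(x, stages, threshold=0.9):
    """Run a hypothetical multi-exit model.

    stages: list of (block, exit_head) pairs, ordered shallow to deep.
      block(features) -> features, exit_head(features) -> logits.
    threshold: assumed confidence level required to stop early.
    Returns (predicted_class, number_of_stages_evaluated).
    """
    features = x
    for depth, (block, exit_head) in enumerate(stages, start=1):
        features = block(features)              # pay for this stage only
        probs = softmax(exit_head(features))    # ask the exit classifier
        confidence = max(probs)
        # Stop at the first confident exit; the last exit always answers.
        if confidence >= threshold or depth == len(stages):
            return probs.index(confidence), depth
```

An easy input leaves at the first exit and skips the cost of the deeper stages, whereas a traditional network behaves as if only the final exit existed.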

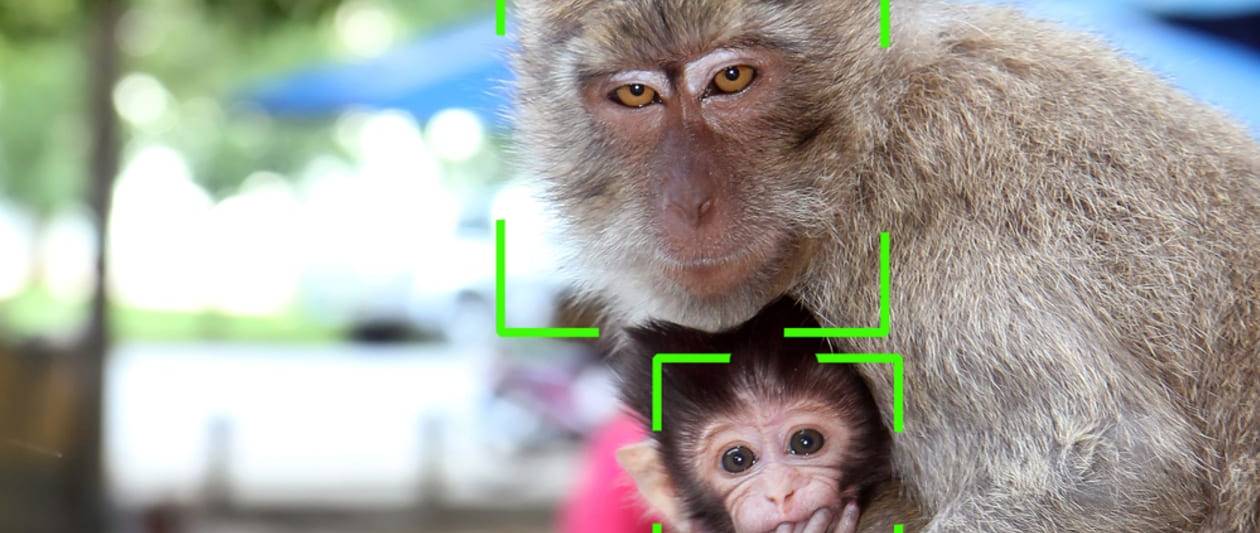

The researchers found that by simply adding complications to images, such as slight background noise, poor lighting, or small objects that obscure the main subject, the input-adaptive model views these images as being more difficult to analyse and assigns more computational resources as a result.
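A toy Python sketch can show why lower confidence translates into more computation. It does not reproduce the researchers' attack: the `flatten` helper is a hypothetical stand-in that acts directly on per-exit scores rather than on an image, and the 0.9 threshold and all numbers are assumed for illustration. The point is only that once every exit's confidence falls below the threshold, the input travels through all the layers.

```python
import math

def max_softmax(logits):
    """Top softmax probability, used here as the exit's confidence."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    return max(exps) / sum(exps)

def exits_needed(per_exit_logits, threshold=0.9):
    """Count how many exits are evaluated before one is confident enough."""
    for depth, logits in enumerate(per_exit_logits, start=1):
        if max_softmax(logits) >= threshold:
            return depth
    return len(per_exit_logits)  # no exit was confident: full network used

def flatten(per_exit_logits, scale):
    """Hypothetical stand-in for a perturbation's effect: shrink the scores
    toward zero so each exit's output moves closer to uniform."""
    return [[v * scale for v in logits] for logits in per_exit_logits]

# Made-up scores for a three-exit model on one easy image.
clean = [[4.0, 0.0, 0.0], [6.0, 0.0, 0.0], [8.0, 0.0, 0.0]]
perturbed = flatten(clean, 0.3)

print(exits_needed(clean), exits_needed(perturbed))
```

With these made-up scores, the clean input stops at the first exit while the flattened one is pushed through all three.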

The researchers experimented with a scenario in which hackers had full information about the neural network and found that the attack could max out its energy stores. However, even when the simulation assumed attackers had only limited information about the network, they were still able to slow down processing and increase energy usage by as much as 80%.

What’s more, these attacks transfer well across different types of neural networks, according to the researchers, who also warned that an attack designed for one image classification system is enough to disrupt many others.

Professor Tudor Dumitraş, the project’s lead researcher, said that more work was needed to understand the extent to which this kind of threat could cause problems.

“What’s important to me is to bring to people’s attention the fact that this is a new threat model, and these kinds of attacks can be done,” Dumitraş said.

Some parts of this article are sourced from:

www.itpro.co.uk
