Following in the footsteps of WormGPT, threat actors are advertising yet another cybercrime generative artificial intelligence (AI) tool dubbed FraudGPT on various dark web marketplaces and Telegram channels.

“This is an AI bot, exclusively targeted for offensive purposes, such as crafting spear phishing emails, creating cracking tools, carding, etc.,” Netenrich security researcher Rakesh Krishnan said in a report published Tuesday.

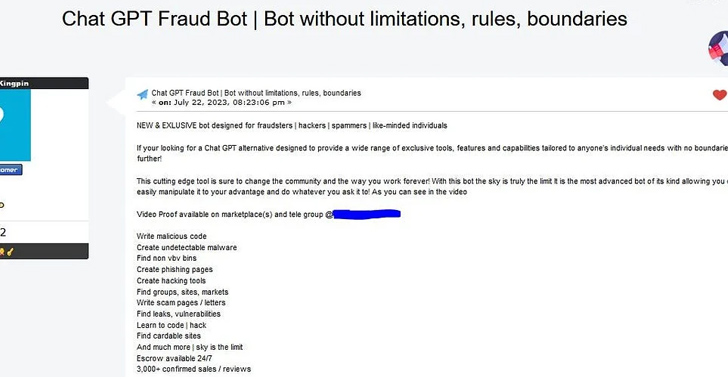

The cybersecurity firm said the offering has been circulating since at least July 22, 2023, for a subscription cost of $200 a month (or $1,000 for six months and $1,700 for a year).

“If your [sic] looking for a Chat GPT alternative designed to provide a wide range of exclusive tools, features, and capabilities tailored to anyone’s individuals with no boundaries then look no further!,” claims the actor, who goes by the online alias CanadianKingpin.

The author also states that the tool could be used to write malicious code, create undetectable malware, and find leaks and vulnerabilities, and that there have been more than 3,000 confirmed sales and reviews. The exact large language model (LLM) used to develop the system is currently not known.

The development comes as threat actors increasingly capitalize on the advent of OpenAI ChatGPT-like AI tools to concoct new adversarial variants that are explicitly engineered to promote all kinds of cybercriminal activity without any restrictions.

Such tools could act as a launchpad for novice actors looking to mount convincing phishing and business email compromise (BEC) attacks at scale, leading to the theft of sensitive information and unauthorized wire payments.

“While organizations can create ChatGPT (and other tools) with ethical safeguards, it isn’t a difficult feat to reimplement the same technology without those safeguards,” Krishnan noted.

“Implementing a defense-in-depth strategy with all the security telemetry available for fast analytics has become all the more essential to finding these fast-moving threats before a phishing email can turn into ransomware or data exfiltration.”

Some parts of this report are sourced from:

thehackernews.com
