Microsoft Copilot has been called one of the most powerful productivity tools on the planet.

Copilot is an AI assistant that lives inside each of your Microsoft 365 apps: Word, Excel, PowerPoint, Teams, Outlook, and so on. Microsoft’s dream is to take the drudgery out of daily work and let humans focus on being creative problem-solvers.

What makes Copilot a different beast than ChatGPT and other AI tools is that it has access to everything you have ever worked on in 365. Copilot can instantly search and compile data from across your documents, presentations, email, calendar, notes, and contacts.

And therein lies the problem for information security teams. Copilot can access all the sensitive data that a user can access, which is often far too much. On average, 10% of a company’s M365 data is open to all employees.

Copilot can also rapidly generate net new sensitive data that must be protected. Prior to the AI revolution, humans’ ability to create and share data far outpaced the capacity to protect it. Just look at data breach trends. Generative AI pours kerosene on this fire.

There is a lot to unpack when it comes to generative AI as a whole: model poisoning, hallucination, deepfakes, and so on. In this post, however, I am going to focus specifically on data security and how your team can ensure a safe Copilot rollout.

Microsoft 365 Copilot use cases

The use cases of generative AI with a collaboration suite like M365 are limitless. It’s easy to see why so many IT and security teams are clamoring to get early access and preparing their rollout plans. The productivity boosts will be enormous.

For example, you can open a blank Word document and ask Copilot to draft a proposal for a client based on a target data set, which can include OneNote pages, PowerPoint decks, and other office docs. In a matter of seconds, you have a full-blown proposal.

Here are a few more examples Microsoft gave during their launch event:

- Copilot can join your Teams meetings and summarize in real time what is being discussed, capture action items, and tell you which questions were unresolved in the meeting.

- Copilot in Outlook can help you triage your inbox, prioritize emails, summarize threads, and generate replies for you.

- Copilot in Excel can analyze raw data and give you insights, trends, and suggestions.

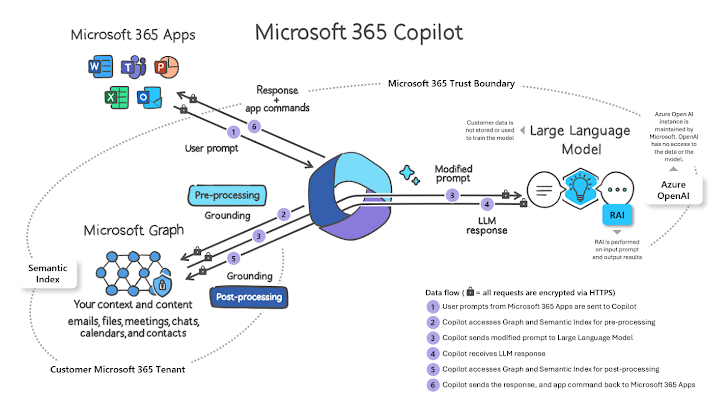

How Microsoft 365 Copilot works

Here is a simple overview of how a Copilot prompt is processed:

- A user inputs a prompt in an app like Word, Outlook, or PowerPoint.

- Microsoft gathers the user’s business context based on their M365 permissions.

- The prompt is sent to the LLM (like GPT-4) to generate a response.

- Microsoft performs post-processing responsible AI checks.

- Microsoft generates a response and commands back to the M365 app.

Image source: Microsoft
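The processing steps listed above can be pictured as a small pipeline. The following is only a minimal sketch under stated assumptions, not Microsoft’s implementation: `Document`, `gather_context`, `call_llm`, `responsible_ai_check`, and `handle_prompt` are all hypothetical names, the LLM is replaced by a stub, and the "responsible AI check" is a trivial blocklist. The one point it tries to show is that the context is filtered by what the user is already allowed to view.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    name: str
    text: str
    allowed_users: frozenset  # hypothetical: users with at least view permission


def gather_context(user, prompt, corpus):
    """Step 2: keep documents the user can view that share a word with the prompt."""
    words = set(prompt.lower().split())
    return [
        doc for doc in corpus
        if user in doc.allowed_users and words & set(doc.text.lower().split())
    ]


def call_llm(prompt, context):
    """Step 3: stand-in for the LLM call; it only reports which documents it saw."""
    sources = ", ".join(doc.name for doc in context) or "no documents"
    return f"Draft for '{prompt}' based on: {sources}"


def responsible_ai_check(response):
    """Step 4: placeholder post-processing check (a trivial blocklist)."""
    blocked_terms = {"password"}
    return not any(term in response.lower() for term in blocked_terms)


def handle_prompt(user, prompt, corpus):
    """Steps 1 to 5 chained together."""
    context = gather_context(user, prompt, corpus)
    response = call_llm(prompt, context)
    if not responsible_ai_check(response):
        return "Response withheld by post-processing checks."
    return response


corpus = [
    Document("pricing.docx", "client pricing proposal", frozenset({"alice", "bob"})),
    Document("salaries.xlsx", "salary proposal for staff", frozenset({"bob"})),
]

print(handle_prompt("alice", "proposal", corpus))
print(handle_prompt("bob", "proposal", corpus))
```

In this toy setup the same prompt produces different drafts for the two users, because the second user can view a file the first cannot. That is the behavior the permissions discussion in the rest of this article turns on.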

Microsoft 365 Copilot security model

With Microsoft, there is always an extreme tension between productivity and security.

This was on display during the coronavirus pandemic, when IT teams were swiftly deploying Microsoft Teams without first fully understanding how the underlying security model worked or how in-shape their organization’s M365 permissions, groups, and link policies were.

The good news:

- Tenant isolation. Copilot only uses data from the current user’s M365 tenant. The AI tool will not surface data from other tenants that the user may be a guest in, nor any tenants that might be set up with cross-tenant sync.

- Training boundaries. Copilot does not use any of your business data to train the foundational LLMs that Copilot uses for all tenants. You shouldn’t have to worry about your proprietary data showing up in responses to other users in other tenants.

The bad news:

- Permissions. Copilot surfaces all organizational data to which individual users have at least view permissions.

- Labels. Copilot-generated content will not inherit the MPIP labels of the files Copilot sourced its response from.

- Humans. Copilot’s responses aren’t guaranteed to be 100% factual or safe; humans must take responsibility for reviewing AI-generated content.

Let’s take the bad news one by one.

Permissions

Granting Copilot access to only what a user can access would be an excellent idea if companies were able to easily enforce least privilege in Microsoft 365.

Microsoft states in its Copilot data security documentation:

“It’s important that you’re using the permission models available in Microsoft 365 services, such as SharePoint, to help ensure the right users or groups have the right access to the right content within your organization.”

Source: Data, Privacy, and Security for Microsoft 365 Copilot

We know empirically, however, that most organizations are about as far from least privilege as they can be. Just take a look at some of the stats from Microsoft’s own State of Cloud Permissions Risk report.

This picture matches what Varonis sees when we perform thousands of Data Risk Assessments for companies using Microsoft 365 each year. In our report, The Great SaaS Data Exposure, we found that the average M365 tenant has:

- 40+ million unique permissions

- 113K+ sensitive records shared publicly

- 27K+ sharing links

Why does this happen? Microsoft 365 permissions are extremely complex. Just think about all the ways in which a user can gain access to data:

- Direct user permissions

- Microsoft 365 group permissions

- SharePoint local permissions (with custom levels)

- Guest access

- External access

- Public access

- Link access (anyone, org-wide, direct, guest)

To make matters worse, permissions are mostly in the hands of end users, not IT or security teams.
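To see why so many access paths make least privilege hard to reason about, here is a minimal sketch that models only three of the paths listed above (direct permissions, group permissions, and sharing links). The `Resource` class, the `access_paths` function, and the `link_scope` values are hypothetical simplifications for illustration and do not correspond to any Microsoft 365 API.

```python
from dataclasses import dataclass, field


@dataclass
class Resource:
    name: str
    direct_users: set = field(default_factory=set)  # direct user permissions
    groups: set = field(default_factory=set)        # group permissions
    link_scope: str = "none"                        # "none", "org-wide", or "anyone"


def access_paths(user, user_groups, resource, is_employee=True):
    """Return every modeled path through which `user` can reach `resource`."""
    paths = []
    if user in resource.direct_users:
        paths.append("direct")
    for group in sorted(user_groups & resource.groups):
        paths.append(f"group:{group}")
    if resource.link_scope == "anyone":
        paths.append("link:anyone")
    elif resource.link_scope == "org-wide" and is_employee:
        paths.append("link:org-wide")
    return paths


payroll = Resource(
    name="payroll.xlsx",
    direct_users={"carol"},
    groups={"finance"},
    link_scope="org-wide",
)

print(access_paths("carol", {"finance"}, payroll))  # three independent paths
print(access_paths("dave", {"sales"}, payroll))     # access via the link alone
```

Even in this reduced model, revoking one grant does not necessarily revoke access: a user with no direct or group permission still reaches the file through an org-wide link. A user is effectively "in scope" whenever the returned list is non-empty.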

Labels

Microsoft relies heavily on sensitivity labels to enforce DLP policies, apply encryption, and broadly prevent data leaks. In practice, however, getting labels to work is difficult, especially if you rely on humans to apply sensitivity labels.

Microsoft paints a rosy picture of labeling and blocking as the ultimate safety net for your data. Reality reveals a bleaker scenario. As humans create data, labeling frequently lags behind or becomes outdated.

Blocking or encrypting data can add friction to workflows, and labeling technologies are limited to specific file types. The more labels an organization has, the more confusing it can become for users. This is especially intense for larger organizations.

The efficacy of label-based data protection will surely degrade when we have AI generating orders of magnitude more data requiring accurate and auto-updating labels.
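As noted in the bad news list, Copilot-generated content does not inherit the labels of its source files. To make concrete what "inheriting" could mean, here is a hypothetical sketch that picks the most restrictive label among a set of sources. The label names, their ordering in `LABEL_PRIORITY`, and the `most_restrictive_label` helper are assumptions made up for this illustration; this is not a description of how Copilot or Microsoft’s labeling actually behaves.

```python
# Hypothetical label taxonomy, ordered from least to most restrictive.
LABEL_PRIORITY = ["Public", "General", "Confidential", "Highly Confidential"]


def most_restrictive_label(source_labels):
    """Return the most restrictive label among the sources, or None if none are labeled."""
    labeled = [label for label in source_labels if label is not None]
    if not labeled:
        return None
    return max(labeled, key=LABEL_PRIORITY.index)


# A generated proposal drawing on three sources, one of them unlabeled.
sources = ["General", None, "Confidential"]
print(most_restrictive_label(sources))
```

Without a rule of this kind, a document assembled from confidential sources starts life unlabeled, and the unlabeled source in the example shows a second gap: anything that was never labeled contributes nothing to the result.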

Are my labels okay?

Varonis can validate and improve an organization’s Microsoft sensitivity labeling by scanning, discovering, and fixing:

- Sensitive files without a label

- Sensitive files with an incorrect label

- Non-sensitive files with a sensitive label
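The three mismatch categories above can be expressed as a simple classification. This is a rough sketch, not how Varonis or Microsoft implements label validation: it assumes some separate scan has already decided whether a file is sensitive and which label it should carry, and the names `SENSITIVE_LABELS` and `audit_label` as well as the label values are hypothetical.

```python
# Hypothetical set of labels that mark content as sensitive.
SENSITIVE_LABELS = {"Confidential", "Highly Confidential"}


def audit_label(is_sensitive, applied_label, expected_label=None):
    """Classify a file into one of the three mismatch categories, or 'ok'."""
    if is_sensitive and applied_label is None:
        return "sensitive, no label"
    if is_sensitive and expected_label is not None and applied_label != expected_label:
        return "sensitive, incorrect label"
    if not is_sensitive and applied_label in SENSITIVE_LABELS:
        return "non-sensitive, sensitive label"
    return "ok"


# (file name, is_sensitive, applied_label, expected_label)
files = [
    ("contract.docx", True, None, "Confidential"),
    ("payroll.xlsx", True, "General", "Highly Confidential"),
    ("menu.pdf", False, "Confidential", None),
    ("memo.docx", False, "General", None),
]

for name, is_sensitive, applied, expected in files:
    print(f"{name}: {audit_label(is_sensitive, applied, expected)}")
```

The hard part in practice is producing the `is_sensitive` and `expected_label` inputs reliably at scale, which is exactly where manual labeling tends to fall behind.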

Humans

AI can make humans lazy. Content generated by LLMs like GPT-4 is not just good, it’s great. In many cases, the speed and the quality far surpass what a human can do. As a result, people start to blindly trust AI to create safe and accurate responses.

We have already seen real-world scenarios in which Copilot drafts a proposal for a client and includes sensitive data belonging to a completely different client. The user hits “send” after a quick glance (or no glance), and now you have a privacy or data breach scenario on your hands.

Getting your tenant security-ready for Copilot

It’s critical to have a sense of your data security posture before your Copilot rollout. Now that Copilot is generally available, it is a great time to get your security controls in place.

Varonis protects thousands of Microsoft 365 customers with our Data Security Platform, which provides a real-time view of risk and the ability to automatically enforce least privilege.

We can help you address the biggest security risks with Copilot with virtually no manual effort. With Varonis for Microsoft 365, you can:

- Automatically discover and classify all sensitive AI-generated content.

- Automatically ensure that MPIP labels are correctly applied.

- Automatically enforce least privilege permissions.

- Continuously monitor sensitive data in M365 and alert and respond to abnormal behavior.

The best way to start is with a free risk assessment. It takes minutes to set up, and within a day or two you will have a real-time view of sensitive data risk.

This article originally appeared on the Varonis blog.

Some parts of this article are sourced from:

thehackernews.com
