2023 has seen its fair share of cyber attacks, but one attack vector has proved more prominent than the others: non-human access. With 11 high-profile attacks in 13 months and an ever-growing ungoverned attack surface, non-human identities are the new perimeter, and 2023 is only the beginning.

Why non-human access is a cybercriminal’s paradise

People always look for the easiest way to get what they want, and this goes for cybercrime as well. Threat actors look for the path of least resistance, and it seems that in 2023 this path was non-user access credentials (API keys, tokens, service accounts and secrets).

“50% of the active access tokens connecting Salesforce and third-party apps are unused. In GitHub and GCP the numbers reach 33%.”

These non-user access credentials are used to connect apps and resources to other cloud services. What makes them a true hacker’s dream is that they have none of the security measures that user credentials do (MFA, SSO or other IAM policies), and they are mostly over-permissive, ungoverned, and never revoked. In fact, 50% of the active access tokens connecting Salesforce and third-party apps are unused. In GitHub and GCP the numbers reach 33%.*
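To make the "unused token" idea concrete, here is a minimal Python sketch of how a team might flag idle credentials in its own inventory. The `TokenRecord` shape, the field names, and the 90-day idle threshold are all assumptions made for illustration; they are not taken from Astrix's methodology or from any platform's API.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import List, Optional


@dataclass
class TokenRecord:
    """Hypothetical inventory entry for one non-human credential."""
    token_id: str
    platform: str                  # e.g. "salesforce", "github", "gcp"
    last_used: Optional[datetime]  # None means no recorded use at all


def find_unused_tokens(tokens: List[TokenRecord], now: datetime,
                       max_idle_days: int = 90) -> List[TokenRecord]:
    """Return tokens that were never used or have been idle too long."""
    cutoff = now - timedelta(days=max_idle_days)
    return [t for t in tokens if t.last_used is None or t.last_used < cutoff]


def unused_share(tokens: List[TokenRecord], now: datetime,
                 max_idle_days: int = 90) -> float:
    """Fraction of tokens considered unused (0.0 for an empty inventory)."""
    if not tokens:
        return 0.0
    return len(find_unused_tokens(tokens, now, max_idle_days)) / len(tokens)


if __name__ == "__main__":
    now = datetime(2023, 12, 1, tzinfo=timezone.utc)
    inventory = [
        TokenRecord("tok-1", "salesforce", None),
        TokenRecord("tok-2", "github", now - timedelta(days=3)),
    ]
    for token in find_unused_tokens(inventory, now):
        print(f"candidate for revocation: {token.token_id} ({token.platform})")
```

In practice the `last_used` values would have to come from each platform's audit logs or admin console; the sketch only shows the filtering step once that data is available.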

So how do cybercriminals exploit these non-human access credentials? To understand the attack paths, we first need to understand the types of non-human access and identities. Generally, there are two types of non-human access: external and internal.

External non-human access is created by employees connecting third-party tools and services to core business and engineering environments like Salesforce, Microsoft 365, Slack, GitHub and AWS, to streamline processes and increase agility. These connections are made through API keys, service accounts, OAuth tokens and webhooks that are owned by the third-party app or service (the non-human identity). With the growing trend of bottom-up software adoption and freemium cloud services, many of these connections are regularly made by different employees without any security governance and, even worse, from unvetted sources. Astrix research shows that 90% of the apps connected to Google Workspace environments are non-marketplace apps, meaning they were not vetted by an official app store. In Slack, the number reaches 77%, while in GitHub it reaches 50%.*

“74% of Personal Access Tokens in GitHub environments have no expiration.”

Internal non-human access is similar, but it is created with internal access credentials, also known as ‘secrets’. R&D teams regularly generate secrets that connect different resources and services. These secrets are often scattered across multiple secret managers (vaults), and the security team has no visibility into where they are, whether they are exposed, what they allow access to, or whether they are misconfigured. In fact, 74% of Personal Access Tokens in GitHub environments have no expiration. Similarly, 59% of the webhooks in GitHub are misconfigured, meaning they are unencrypted and unassigned.*
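The two checks mentioned above (tokens with no expiration, and webhooks that are unencrypted and unassigned) could be sketched roughly as follows. The record types are hypothetical, and the interpretation of "unencrypted" as a non-HTTPS delivery URL and "unassigned" as a missing owner is an assumption, since the source does not define either term precisely.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import List, Optional
from urllib.parse import urlparse


@dataclass
class PersonalAccessToken:
    """Hypothetical record for a personal access token."""
    name: str
    expires_at: Optional[datetime]  # None means the token never expires


@dataclass
class Webhook:
    """Hypothetical record for a configured webhook."""
    url: str
    owner: Optional[str]  # team or person responsible, if any


def tokens_without_expiration(tokens: List[PersonalAccessToken]) -> List[str]:
    """Names of tokens that have no expiration date set."""
    return [t.name for t in tokens if t.expires_at is None]


def webhook_findings(hook: Webhook) -> List[str]:
    """List the configuration problems found for a single webhook."""
    findings = []
    # Assumption: "unencrypted" means payloads are not delivered over HTTPS.
    if urlparse(hook.url).scheme != "https":
        findings.append("unencrypted delivery URL")
    # Assumption: "unassigned" means nobody is recorded as the owner.
    if not hook.owner:
        findings.append("no assigned owner")
    return findings
```

A webhook such as `Webhook("http://example.com/hook", None)` would return both findings, while one delivered over HTTPS with a named owner would return an empty list.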

Schedule a live demo of Astrix, a leader in non-human identity security

2023’s high-profile attacks exploiting non-human access

This threat is anything but theoretical. 2023 has seen some big brands fall victim to non-human access exploits, with thousands of customers affected. In such attacks, attackers take advantage of exposed or stolen access credentials to penetrate organizations’ most sensitive core systems and, in the case of external access, reach their customers’ environments (supply chain attacks). Some of these high-profile attacks include:

- Okta (October 2023): Attackers used a leaked service account to access Okta’s support case management system. This allowed the attackers to view files uploaded by a number of Okta customers as part of recent support cases.

- GitHub Dependabot (September 2023): Hackers stole GitHub Personal Access Tokens (PATs). These tokens were then used to make unauthorized commits as Dependabot to both public and private GitHub repositories.

- Microsoft SAS Key (September 2023): A SAS token published by Microsoft’s AI researchers actually granted full access to the entire Storage account it was created on, leading to a leak of over 38TB of extremely sensitive information. These permissions were available to attackers for more than 2 years (!).

- Slack GitHub Repositories (January 2023): Threat actors gained access to Slack’s externally hosted GitHub repositories via a “limited” number of stolen Slack employee tokens. From there, they were able to download private code repositories.

- CircleCI (January 2023): An engineering employee’s computer was compromised by malware that bypassed their antivirus solution. The compromised machine allowed the threat actors to access and steal session tokens. Stolen session tokens give threat actors the same access as the account owner, even when the accounts are protected with two-factor authentication.
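The SAS incident in the list above shows how much risk can hide in a single shared URL. As a rough illustration, the Python sketch below inspects a SAS URL before it is shared. It assumes the Azure query parameters `sp` (signed permissions), `se` (signed expiry) and `srt` (signed resource types, which appears on account-level SAS tokens); the URLs, the set of "risky" permission letters, and the 30-day lifetime limit are illustrative choices, not Microsoft guidance.

```python
from datetime import datetime, timezone
from typing import List
from urllib.parse import parse_qs, urlparse

# Assumption: anything beyond read/list is treated as risky for a shared link.
RISKY_PERMISSIONS = set("wdacu")  # write, delete, add, create, update


def inspect_sas_url(url: str, now: datetime, max_days: int = 30) -> List[str]:
    """Return findings about a SAS URL's permissions, scope, and expiry."""
    params = parse_qs(urlparse(url).query)
    findings = []

    permissions = params.get("sp", [""])[0]
    risky = sorted(set(permissions) & RISKY_PERMISSIONS)
    if risky:
        findings.append("grants more than read/list: " + "".join(risky))

    if "srt" in params:
        findings.append("account-level SAS rather than a single resource")

    expiry_raw = params.get("se", [None])[0]
    if expiry_raw is None:
        findings.append("no expiry parameter found")
    else:
        # Normalise a trailing "Z" so older Python versions can parse it.
        expiry = datetime.fromisoformat(expiry_raw.replace("Z", "+00:00"))
        if expiry.tzinfo is None:
            expiry = expiry.replace(tzinfo=timezone.utc)
        if (expiry - now).days > max_days:
            findings.append(f"expires more than {max_days} days from now")

    return findings


if __name__ == "__main__":
    example = ("https://example.blob.core.windows.net/container"
               "?sv=2020-08-04&ss=b&srt=sco&sp=rwdl"
               "&se=2051-10-06T07:00:00Z&sig=REDACTED")
    for finding in inspect_sas_url(example, datetime.now(timezone.utc)):
        print(finding)
```

A check like this only reads the URL; it cannot tell whether the signature is still valid or whether the underlying account key has been rotated.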

The impact of GenAI access

“32% of GenAI apps connected to Google Workspace environments have very wide access permissions (read, write, delete).”

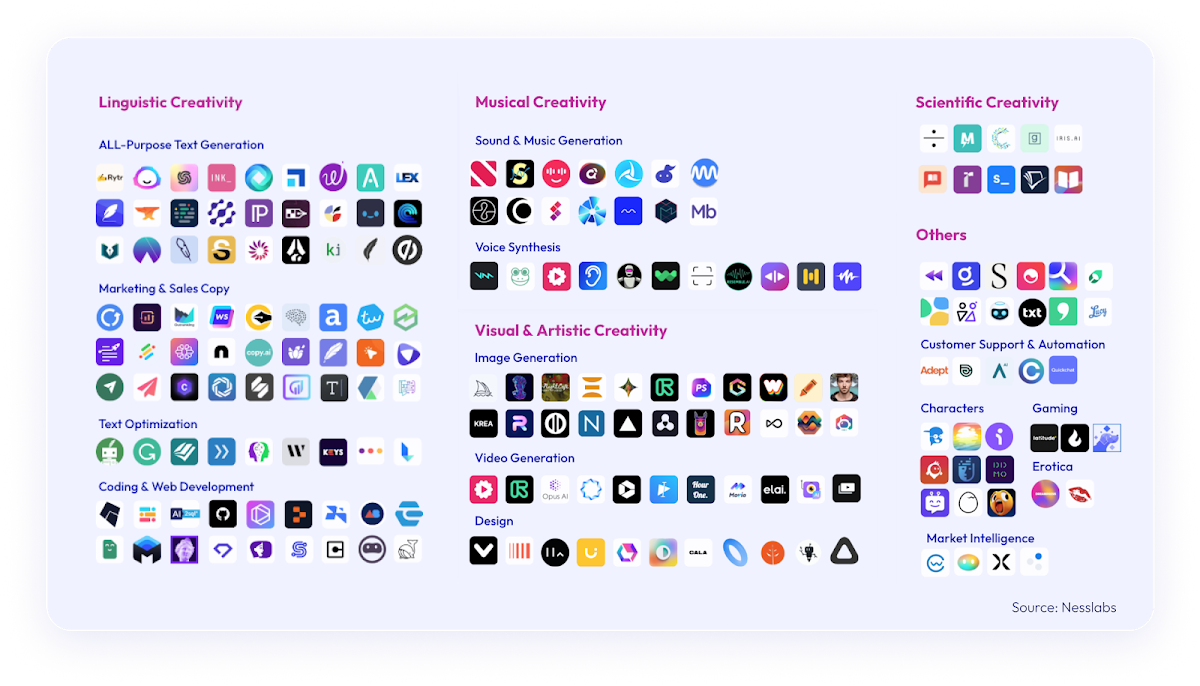

As one might expect, the vast adoption of GenAI tools and services exacerbates the non-human access issue. GenAI gained enormous popularity in 2023, and it is likely to only grow. With ChatGPT becoming the fastest-growing app in history, and AI-powered apps being downloaded 1506% more than last year, the security risks of using and connecting often unvetted GenAI apps to business core systems are already causing sleepless nights for security leaders. The numbers from Astrix Research provide another testament to this attack surface: 32% of GenAI apps connected to Google Workspace environments have very wide access permissions (read, write, delete).*
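One way to picture "very wide access permissions" is to compare the OAuth scopes each connected app holds against a short list of scopes known to allow reading, writing and deleting. The Python sketch below does that for a hypothetical export of app grants. The two Google scope strings are real, but which scopes count as "very wide" in the Astrix data is not stated, so the list here is an assumption, as are the app names.

```python
from typing import Dict, List

# Assumption: these scopes are treated as "broad" because they permit
# reading, modifying, and deleting all data in the respective service.
BROAD_SCOPES = {
    "https://www.googleapis.com/auth/drive": "full Drive access",
    "https://mail.google.com/": "full Gmail access",
}


def broad_grants(app_scopes: Dict[str, List[str]]) -> Dict[str, List[str]]:
    """Map each app name to the broad scopes it has been granted."""
    result = {}
    for app, scopes in app_scopes.items():
        hits = sorted(s for s in scopes if s in BROAD_SCOPES)
        if hits:
            result[app] = hits
    return result


if __name__ == "__main__":
    # Hypothetical export of third-party app grants.
    grants = {
        "summarizer-bot": ["https://www.googleapis.com/auth/drive"],
        "calendar-helper": ["https://www.googleapis.com/auth/calendar.readonly"],
    }
    for app, scopes in broad_grants(grants).items():
        for scope in scopes:
            print(f"{app}: {BROAD_SCOPES[scope]}")
```

Apps that appear in the result are candidates for review, for example to check whether a narrower scope such as a read-only variant would be enough.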

The risks of GenAI access are making waves industry-wide. In a recent report named “Emerging Tech: Top 4 Security Risks of GenAI”, Gartner explains the risks that come with the prevalent use of GenAI tools and technologies. According to the report, “The use of generative AI (GenAI) large language models (LLMs) and chat interfaces, especially connected to third-party solutions outside the organization firewall, represent a widening of attack surfaces and security threats to enterprises.”

Security has to be an enabler

Since non-human access is the direct result of cloud adoption and automation, both welcome trends contributing to growth and efficiency, security must support it. With security leaders continuously striving to be enablers rather than blockers, an approach for securing non-human identities and their access credentials is no longer optional.

Improperly secured non-human access, both external and internal, massively increases the likelihood of supply chain attacks, data breaches, and compliance violations. Security policies, as well as automated tools to enforce them, are a must for those who look to secure this volatile attack surface while allowing the business to reap the benefits of automation and hyper-connectivity.

*According to Astrix Research data, collected from enterprise environments of organizations with 1,000-10,000 employees

Some parts of this article are sourced from:

thehackernews.com
